Beyond the Checkbox: Why Learning Design Must Shift from Vanity to Value Metrics

It is a familiar scene in almost every organization and classroom: a new training module is rolled out, learners click through the slides, pass a basic multiple-choice quiz, and the learning management system (LMS) registers a 100% completion rate. We celebrate the metric, check the box, and move on.

But as instructional designers and learning analysts, we have to ask the hard question: Did any actual learning take place?

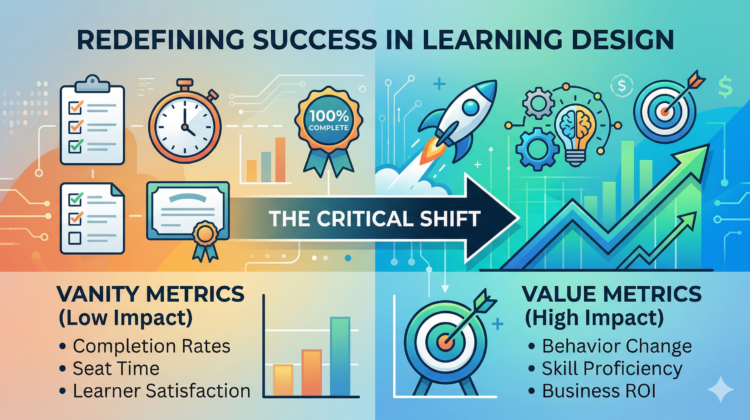

Much like setting up a controlled experiment in a science lab, effective learning design requires measurable, observable outcomes. Relying purely on completion rates is like measuring the success of an experiment by whether the equipment was turned on. To build truly effective learning ecosystems, the industry must pivot from superficial “vanity metrics” to performance-based “value metrics.”

Here is a deeper dive into why this shift is critical and how to implement it.

The Illusion of Progress: Understanding Vanity Metrics

Vanity metrics are data points that make an organization look good on paper but offer little insight into behavioral change or business impact. They are the “check-box” approach to Learning and Development (L&D).

Common vanity metrics include:

Completion Rates: These tell us only that the learner navigated to the final screen.

Seat Time (Time in Module): This tells us only that the browser was open; it does not measure cognitive engagement.

Smile Sheets (Learner Satisfaction): Learners’ answers about whether they “liked” the training often reflect the instructor’s charisma or the media’s slickness more than actual skill acquisition (Thalheimer, 2016).

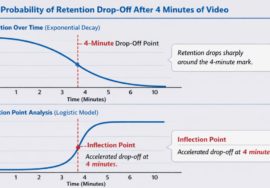

Initial Quiz Scores: Short-term recall of facts immediately after reading them is a poor predictor of long-term retention or on-the-job application (Dirksen, 2015).

When we optimize for vanity metrics, we inadvertently design learning experiences that prioritize speed and ease over challenge and growth.

The Proof of Impact: Shifting to Value Metrics

Value metrics, on the other hand, are the bedrock of data-driven instructional design. They measure the friction, the application, and the ultimate real-world impact of the learning intervention.

To prove the ROI of a learning program, we must track:

Time-to-Competency: How quickly can a learner transition from novice to independent practitioner in their actual environment?

Behavioral Application Rate: Are learners utilizing the new skills, tools, or job aids in the flow of their daily work?

Business Impact (Level 4 Evaluation): Did the training solve the systemic issue it was designed to address (Kirkpatrick & Kirkpatrick, 2016)? For example, did the customer service module actually reduce ticket resolution time?

Knowledge Retention Over Time: Does the knowledge survive 30, 60, or 90 days post-training? Spaced repetition analytics and follow-up assessments at those checkpoints can answer this.
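To make three of these metrics concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the record fields (`trained`, `first_independent`, `used_skill`), the sample learners, and the follow-up scores are hypothetical and do not come from any particular LMS. Business impact is left out because it depends on an external performance measure.

```python
from datetime import date

# Hypothetical learner records; field names are illustrative only.
learners = [
    {"id": "a", "trained": date(2024, 1, 8),
     "first_independent": date(2024, 2, 5), "used_skill": True},
    {"id": "b", "trained": date(2024, 1, 8),
     "first_independent": date(2024, 3, 4), "used_skill": False},
    {"id": "c", "trained": date(2024, 1, 15),
     "first_independent": None, "used_skill": True},  # not yet independent
]

# Hypothetical follow-up assessment scores (0 to 1), keyed by days post-training.
retention_scores = {
    30: [0.9, 0.8, 0.7],
    60: [0.8, 0.6, 0.7],
    90: [0.7, 0.5, 0.6],
}


def time_to_competency_days(records):
    """Mean days from training to the first independent task.

    Learners who have not yet reached independence are excluded,
    so the result can understate the true figure.
    """
    spans = [
        (r["first_independent"] - r["trained"]).days
        for r in records
        if r["first_independent"] is not None
    ]
    return sum(spans) / len(spans) if spans else None


def application_rate(records):
    """Share of trained learners observed using the skill on the job."""
    return sum(r["used_skill"] for r in records) / len(records)


def retention_by_checkpoint(scores):
    """Mean follow-up score at each checkpoint, ordered by day."""
    return {day: sum(vals) / len(vals) for day, vals in sorted(scores.items())}


print("Time-to-competency (days):", time_to_competency_days(learners))
print("Behavioral application rate:", round(application_rate(learners), 2))
print("Retention by checkpoint:", retention_by_checkpoint(retention_scores))
```

How “first independent task” and “used the skill” are observed is the hard part in practice; the sketch assumes someone, such as a manager or a system log, has already recorded them.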

How to Make the Shift in Your Learning Designs

Transitioning from vanity to value requires a systemic shift in how we approach the entire instructional design lifecycle.

1. Start with the End in Mind (Action Mapping): Before developing a single slide or video, define the specific, observable behavior you want to change. If the goal cannot be measured in the physical or digital workspace, it is not a valid learning objective.

2. Design for “Learning in the Flow of Work” (LIFOW): Move away from marathon e-learning courses. Value metrics thrive when learning is delivered precisely at the moment of need. Micro-learning interventions and performance support tools are much easier to track against specific workplace tasks.

3. Leverage True Data Analytics: Move beyond basic LMS reporting. By integrating learning records with actual performance data (like sales metrics, error rates, or productivity logs), you can test whether the learning intervention correlates with real-world results. A correlation alone does not prove the training caused the change, but it is far stronger evidence than a completion rate.
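As a rough illustration of that third step, the sketch below joins two hypothetical exports on a learner ID and computes a Pearson correlation between them. The data, the dictionary names (`lms_scores`, `error_rates`), and the choice of Pearson's r are all assumptions made for the example; a real analysis would need a far larger sample and some control for other factors.

```python
from math import sqrt

# Hypothetical exports: delayed assessment scores from the LMS and
# post-training error rates from an operational system, keyed by learner ID.
lms_scores = {"a": 0.9, "b": 0.6, "c": 0.75, "d": 0.4}
error_rates = {"a": 0.02, "b": 0.07, "c": 0.04, "e": 0.05}


def join_on_learner(left, right):
    """Pair values for learners present in both sources (an inner join)."""
    shared = sorted(left.keys() & right.keys())
    return [(left[k], right[k]) for k in shared]


def pearson_r(pairs):
    """Pearson correlation coefficient for a list of (x, y) pairs."""
    n = len(pairs)
    if n < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var_x = sum((x - mean_x) ** 2 for x, _ in pairs)
    var_y = sum((y - mean_y) ** 2 for _, y in pairs)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return cov / sqrt(var_x * var_y)


pairs = join_on_learner(lms_scores, error_rates)
r = pearson_r(pairs)
print(f"{len(pairs)} matched learners, r = {r:.2f}")
```

In this toy data, higher assessment scores go with lower error rates, so r comes out negative. Learners who appear in only one source are dropped by the join, which is worth reporting alongside the coefficient.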

Conclusion

The ultimate goal of learning design is human growth and improved performance. When we strip away the vanity metrics, we are left with the undeniable truth of our data. Embracing value metrics is not just about proving the worth of L&D; it is about respecting the learner’s time and ensuring that every educational intervention drives meaningful, measurable change.

References

Dirksen, J. (2015). Design for how people learn (2nd ed.). New Riders.

Kirkpatrick, D. L., & Kirkpatrick, J. D. (2016). Evaluating training programs: The four levels (3rd ed.). Berrett-Koehler Publishers.

Thalheimer, W. (2016). Performance-focused smile sheets: A radical rethinking of a dangerous art form. Work-Learning Press.